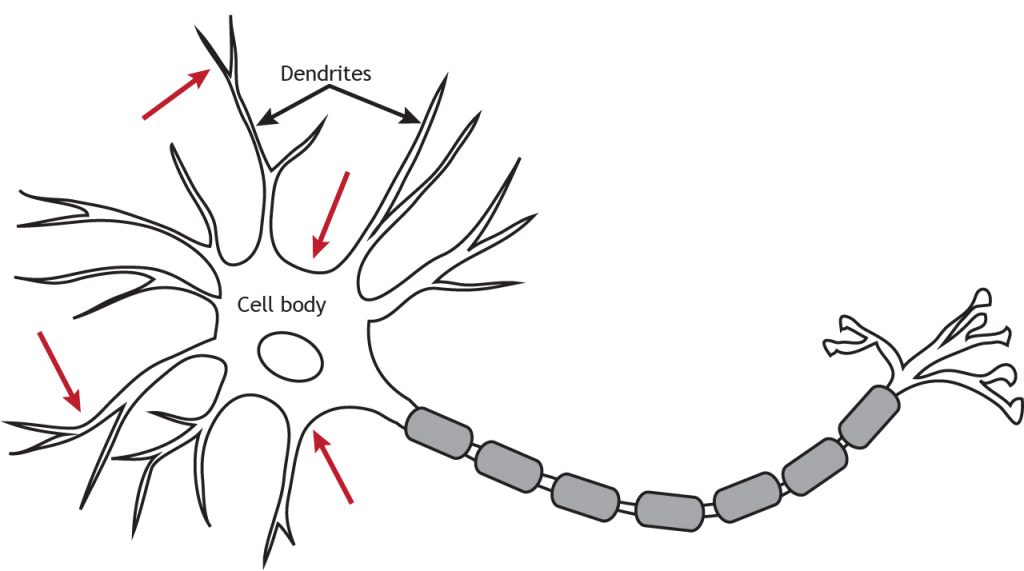

Machine learning is going through remarkable developments powered by deep neural networks (LeCun et al., 2015), many of them inspired by neuroscience. Interestingly, the workhorse of deep learning is still the classical backpropagation of errors algorithm (backprop; Rumelhart et al., 1986), which has long been dismissed in neuroscience on the grounds of biological implausibility (Grossberg, 1987; Crick, 1989). The main learning mechanism behind these advances, error backpropagation, therefore appears to be at odds with neurobiology.

Here, we introduce a multilayer neuronal network model with simplified dendritic compartments in which error-driven synaptic plasticity adapts the network towards a global desired output. In contrast to previous work, our model does not require separate phases, and synaptic learning is driven continuously in time by local dendritic prediction errors. Such errors originate at apical dendrites and arise from a mismatch between predictive input from lateral interneurons and activity from actual top-down feedback. Through the use of simple dendritic compartments and different cell types, our model can represent both error and normal activity within a pyramidal neuron.

We demonstrate the learning capabilities of the model in regression and classification tasks, and show analytically that it approximates the error backpropagation algorithm. Moreover, our framework is consistent with recent observations of learning between brain areas and the architecture of cortical microcircuits. Overall, we introduce a novel view of learning on dendritic cortical circuits and on how the brain may solve the long-standing synaptic credit assignment problem.
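To make the idea of an apical prediction error more concrete, here is a heavily simplified, rate-based sketch. It is not the model from the work summarized above: that model runs continuously in time with no separate phases, whereas this sketch collapses the dynamics to a single steady-state evaluation. It assumes (1) the top-down weights onto the hidden pyramidal cells equal the transpose of the forward weights `W2`, (2) lateral interneurons have already learned to cancel the top-down input predicted from the network's own output, and (3) a teaching signal nudges the output a fraction `beta` toward the target. Under these assumptions, the apical mismatch (top-down feedback minus interneuron prediction) multiplied by the rate slope equals `beta` times the hidden-layer backprop error. All function names (`apical_error`, `train_step`, `backprop_delta`) and parameters (`beta`, `eta`) are hypothetical.

```python
import math
import random

# Toy steady-state sketch (assumption: NOT the authors' continuous-time model).
# Hypothetical names: apical_error, train_step, backprop_delta.

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def transpose(W):
    return [list(col) for col in zip(*W)]

def phi(a):
    # Assumed hidden rate function: logistic sigmoid.
    return 1.0 / (1.0 + math.exp(-a))

def dphi(a):
    s = phi(a)
    return s * (1.0 - s)

def forward(W1, W2, x):
    a_h = matvec(W1, x)           # basal (bottom-up) potential of hidden pyramidal cells
    r_h = [phi(a) for a in a_h]   # hidden firing rates
    y = matvec(W2, r_h)           # linear output layer (feedforward prediction)
    return a_h, r_h, y

def apical_error(W1, W2, x, target, beta=0.1):
    a_h, r_h, y_pred = forward(W1, W2, x)
    # Assumed teaching signal: output nudged a fraction beta toward the target.
    y_nudged = [yp + beta * (t - yp) for yp, t in zip(y_pred, target)]
    B = transpose(W2)  # assumption: top-down weights mirror the forward weights
    top_down = matvec(B, y_nudged)
    # Assumption: interneurons cancel the top-down input predicted from y_pred.
    lateral = [-v for v in matvec(B, y_pred)]
    v_apical = [td + li for td, li in zip(top_down, lateral)]  # local mismatch
    return a_h, r_h, y_pred, y_nudged, v_apical

def train_step(W1, W2, x, target, beta=0.1, eta=0.5):
    a_h, r_h, y_pred, y_nudged, v_apical = apical_error(W1, W2, x, target, beta)
    # Hidden weights: apical mismatch * rate slope * presynaptic input.
    for j, row in enumerate(W1):
        for i in range(len(row)):
            row[i] += eta * v_apical[j] * dphi(a_h[j]) * x[i]
    # Output weights: (nudged - predicted output) * presynaptic hidden rate.
    for k, row in enumerate(W2):
        for j in range(len(row)):
            row[j] += eta * (y_nudged[k] - y_pred[k]) * r_h[j]

def backprop_delta(W1, W2, x, target):
    # Reference: standard backprop error for the hidden layer (squared loss).
    a_h, _, y = forward(W1, W2, x)
    e = [t - yk for t, yk in zip(target, y)]
    return [b * dphi(a) for b, a in zip(matvec(transpose(W2), e), a_h)]

def loss(W1, W2, data):
    return sum(sum((t - yk) ** 2 for t, yk in zip(tgt, forward(W1, W2, x)[2]))
               for x, tgt in data) / len(data)

random.seed(0)
W1 = [[random.uniform(-1, 1) for _ in range(3)] for _ in range(4)]
W2 = [[random.uniform(-1, 1) for _ in range(4)]]
# Toy regression target x0 - x1; the third input is a constant bias of 1.0.
data = [([0.0, 0.0, 1.0], [0.0]), ([0.0, 1.0, 1.0], [-1.0]),
        ([1.0, 0.0, 1.0], [1.0]), ([1.0, 1.0, 1.0], [0.0])]

initial_loss = loss(W1, W2, data)
for _ in range(500):
    for x, tgt in data:
        train_step(W1, W2, x, tgt)
final_loss = loss(W1, W2, data)
print(f"loss: {initial_loss:.4f} -> {final_loss:.4f}")
```

With `eta = 0.5` and `beta = 0.1`, each update in this sketch is plain gradient descent with an effective step of 0.05. The point is only that a purely local quantity, the apical mismatch, can stand in for the backpropagated error once the stated assumptions hold. The circuit described above is claimed to reach a similar effect without the idealized weight symmetry and perfect cancellation assumed here.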
